Regularizers

Regularization in PyKEEN.

Classes

- A simple L_p norm based regularizer.
- A regularizer which does not perform any regularization.
- A convex combination of regularizers.
- A simple x^p based regularizer.
- A regularizer for the soft constraints in TransH.
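To make the difference between the norm-based, power-sum, and combined penalties concrete, here is a minimal pure-Python sketch that uses plain lists instead of tensors. All function names are hypothetical. The sketch assumes that each per-vector penalty is averaged over the batch; PyKEEN's own reduction, dimension handling, and normalization options may differ. The TransH soft-constraint regularizer is not sketched.

```python
from typing import Callable, List, Sequence

Vector = Sequence[float]


def lp_penalty(vectors: List[Vector], p: float = 2.0) -> float:
    """Hypothetical L_p penalty: mean over the batch of (sum_j |x_j|^p)^(1/p)."""
    norms = [sum(abs(v) ** p for v in vec) ** (1.0 / p) for vec in vectors]
    return sum(norms) / len(norms)


def power_sum_penalty(vectors: List[Vector], p: float = 2.0) -> float:
    """Hypothetical x^p penalty: mean over the batch of sum_j |x_j|^p (no p-th root)."""
    sums = [sum(abs(v) ** p for v in vec) for vec in vectors]
    return sum(sums) / len(sums)


def no_penalty(vectors: List[Vector]) -> float:
    """A regularizer that performs no regularization always contributes zero."""
    return 0.0


def convex_combination(
    penalties: List[Callable[[List[Vector]], float]],
    weights: List[float],
) -> Callable[[List[Vector]], float]:
    """Combine penalties with non-negative weights rescaled to sum to one."""
    if len(penalties) != len(weights):
        raise ValueError("need exactly one weight per penalty")
    if any(w < 0 for w in weights) or sum(weights) <= 0:
        raise ValueError("weights must be non-negative with a positive sum")
    total = sum(weights)
    normalized = [w / total for w in weights]

    def combined(vectors: List[Vector]) -> float:
        return sum(w * f(vectors) for w, f in zip(normalized, penalties))

    return combined


batch = [[3.0, 4.0], [0.0, 1.0]]
print(lp_penalty(batch))         # norms 5.0 and 1.0, mean 3.0
print(power_sum_penalty(batch))  # sums 25.0 and 1.0, mean 13.0
print(convex_combination([lp_penalty, power_sum_penalty], [1.0, 1.0])(batch))  # 8.0
```

Dropping the p-th root is what separates the power-sum penalty from the norm: for p = 2 it penalizes large entries quadratically, and its gradient does not blow up near the zero vector.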

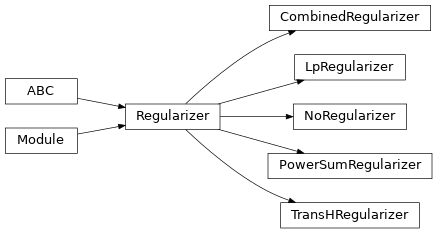

Class Inheritance Diagram¶

Base Classes

class Regularizer(weight=1.0, apply_only_once=False, parameters=None)

A base class for all regularizers.

Instantiate the regularizer.

- Parameters

  - weight (float) – The relative weight of the regularization.
  - apply_only_once (bool) – Should the regularization be applied only once after each reset?
  - parameters (Optional[Iterable[Parameter]]) – Specific parameters to track. If none are given, your model is expected to delegate to the update() function automatically.
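The interplay of weight, apply_only_once, the abstract forward(), and the accumulate-then-pop cycle can be sketched in plain Python. This is a minimal illustration, not PyKEEN's implementation: SketchRegularizer, SquaredSum, and the updated flag are hypothetical names, floats stand in for tensors, and the sketch assumes that update() adds forward(x) for each input to the running regularization term.

```python
from abc import ABC, abstractmethod
from typing import Sequence


class SketchRegularizer(ABC):
    """Hypothetical stand-in for the base class, using plain floats instead of tensors."""

    def __init__(self, weight: float = 1.0, apply_only_once: bool = False) -> None:
        self.weight = weight
        self.apply_only_once = apply_only_once
        self.regularization_term = 0.0
        self.updated = False  # hypothetical flag: has update() run since the last reset?

    @abstractmethod
    def forward(self, x: Sequence[float]) -> float:
        """Compute the unweighted regularization term for one input."""

    def update(self, *tensors: Sequence[float]) -> None:
        # With apply_only_once=True, ignore further updates until the next reset.
        if self.apply_only_once and self.updated:
            return
        self.regularization_term += sum(self.forward(x) for x in tensors)
        self.updated = True

    @property
    def term(self) -> float:
        # The weighted term is the accumulated term scaled by the weight.
        return self.weight * self.regularization_term

    def pop_regularization_term(self) -> float:
        # Return the weighted term, then reset the accumulator and the flag.
        value = self.term
        self.regularization_term = 0.0
        self.updated = False
        return value


class SquaredSum(SketchRegularizer):
    """Hypothetical subclass: the sum of squared entries."""

    def forward(self, x: Sequence[float]) -> float:
        return sum(v * v for v in x)


reg = SquaredSum(weight=0.5)
reg.update([1.0, 2.0])  # adds 1 + 4 = 5
reg.update([3.0])       # adds 9, so the accumulated term is 14
print(reg.pop_regularization_term())  # 7.0 (= 0.5 * 14), after which the term is reset
```

With apply_only_once=True, the second update() call in this example would be ignored, so the popped value would be 0.5 * 5 instead.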

apply_only_once: bool

Should the regularization only be applied once? This was used for ConvKB and defaults to False.

abstract forward(x)

Compute the regularization term for one tensor.

- Return type

FloatTensor

classmethod get_normalized_name()

Get the normalized name of the regularizer class.

- Return type

hpo_default: ClassVar[Mapping[str, Any]]

The default strategy for optimizing the regularizer’s hyper-parameters.
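hpo_default maps hyper-parameter names to descriptions of their search space. The sketch below assumes a dict-of-dicts format with type, low, high, and scale keys and uses a hypothetical sampler, sample_hyperparameters, in place of a real optimization backend. The concrete keys and ranges that a given regularizer declares may differ.

```python
import math
import random
from typing import Any, Dict, Mapping

# Hypothetical search space in the assumed hpo_default style: one entry per
# constructor argument, giving its type, range, and sampling scale.
HPO_DEFAULT: Mapping[str, Mapping[str, Any]] = {
    "weight": {"type": float, "low": 0.01, "high": 1.0, "scale": "log"},
}


def sample_hyperparameters(
    space: Mapping[str, Mapping[str, Any]], rng: random.Random
) -> Dict[str, Any]:
    """Draw one configuration; 'log' scale samples uniformly in log-space."""
    config: Dict[str, Any] = {}
    for name, spec in space.items():
        low, high = spec["low"], spec["high"]
        if spec.get("scale") == "log":
            value = math.exp(rng.uniform(math.log(low), math.log(high)))
        else:
            value = rng.uniform(low, high)
        config[name] = spec["type"](value)
    return config


print(sample_hyperparameters(HPO_DEFAULT, random.Random(0)))
```

Sampling a weight on a log scale spreads trials evenly across orders of magnitude (0.01 to 0.1 gets as many draws as 0.1 to 1.0), which usually suits regularization strengths.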

pop_regularization_term()

Return the weighted regularization term and reset the regularizer afterwards.

- Return type

FloatTensor

regularization_term: torch.FloatTensor

The current regularization term (a scalar).

property term

Return the weighted regularization term.

- Return type

FloatTensor

weight: torch.FloatTensor

The overall regularization weight.